Caption Images

These models generate text descriptions and captions from images. Use them for alt text, image search, content indexing, training data preparation, and accessibility.

Models we recommend

Best for detailed captions: Claude Sonnet 4.5

Claude Sonnet 4.5 produces the most detailed, nuanced image descriptions of the models on this page. It understands composition, style, mood, and context: not just what's in the image, but why it matters. It is a strong fit for detailed alt text, editorial descriptions, and cases where caption quality matters more than speed.

Best all-around: GPT-5

GPT-5 combines strong visual understanding with excellent instruction following. Tell it exactly what kind of caption you want — short and punchy, detailed and technical, or structured with specific fields — and it delivers. Supports configurable reasoning effort so you can trade depth for speed.
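One way to use that instruction following is to keep a small set of caption-style prompts and pick one per request. The sketch below builds a request payload for each style. The prompt wording is illustrative, and the input field names (`prompt`, `image_input`, `reasoning_effort`) are assumptions about the model's input schema, so confirm them on the model page first. The effort values follow OpenAI's GPT-5 levels (`minimal`, `low`, `medium`, `high`).

```python
from typing import Literal

CaptionStyle = Literal["short", "detailed", "structured"]

# Illustrative prompt templates; adjust the wording for your own images.
PROMPTS: dict[str, str] = {
    "short": "Write a one-sentence caption for this image, under 20 words.",
    "detailed": (
        "Describe this image in detail: subjects, setting, composition, "
        "lighting, and mood."
    ),
    "structured": (
        "Describe this image as JSON with the keys "
        '"subject", "setting", "style", and "alt_text".'
    ),
}

# Reasoning effort levels documented for OpenAI's GPT-5 models.
EFFORT_LEVELS = {"minimal", "low", "medium", "high"}


def build_caption_input(
    image_url: str,
    style: CaptionStyle = "short",
    reasoning_effort: str = "minimal",
) -> dict:
    """Build a request payload for one captioning call.

    The field names below are assumptions about the model's input schema;
    confirm them on the model page before use.
    """
    if style not in PROMPTS:
        raise ValueError(f"unknown caption style: {style!r}")
    if reasoning_effort not in EFFORT_LEVELS:
        raise ValueError(f"unknown reasoning effort: {reasoning_effort!r}")
    return {
        "prompt": PROMPTS[style],
        "image_input": [image_url],  # assumed: a list of image URLs
        "reasoning_effort": reasoning_effort,  # lower effort trades depth for speed
    }
```

A payload built this way could then be passed to a client call such as `replicate.run("openai/gpt-5", input=payload)` from the Replicate Python client, provided the field names match the model's schema.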

Fastest official option: Gemini 3 Flash

Gemini 3 Flash is built for speed. It processes images quickly while still producing accurate, useful descriptions. Also understands video, so you can caption frames from video content. A great default for high-volume captioning workflows.
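If you extract still frames yourself and caption each one, you first need to decide which moments to sample. The hypothetical helper below spreads a fixed number of timestamps evenly across a clip, placing each at the middle of an equal-length segment so the very first and last instants (which can be fades or black frames) are skipped. It is a sketch of one simple sampling strategy, not a feature of Gemini 3 Flash itself.

```python
def sample_timestamps(duration_s: float, num_frames: int) -> list[float]:
    """Return `num_frames` timestamps (in seconds) spread evenly through a clip.

    The clip is split into equal segments and each timestamp sits at the
    middle of its segment, so the very first and last instants are skipped.
    """
    if duration_s <= 0 or num_frames <= 0:
        raise ValueError("duration_s and num_frames must be positive")
    step = duration_s / num_frames
    return [round(step * (i + 0.5), 3) for i in range(num_frames)]
```

For example, `sample_timestamps(10, 5)` returns `[1.0, 3.0, 5.0, 7.0, 9.0]`. Each timestamp could then be handed to a frame extractor such as `ffmpeg -ss <seconds> -i input.mp4 -frames:v 1 frame.jpg` before captioning the stills.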

For complex visual analysis: GPT-5.4

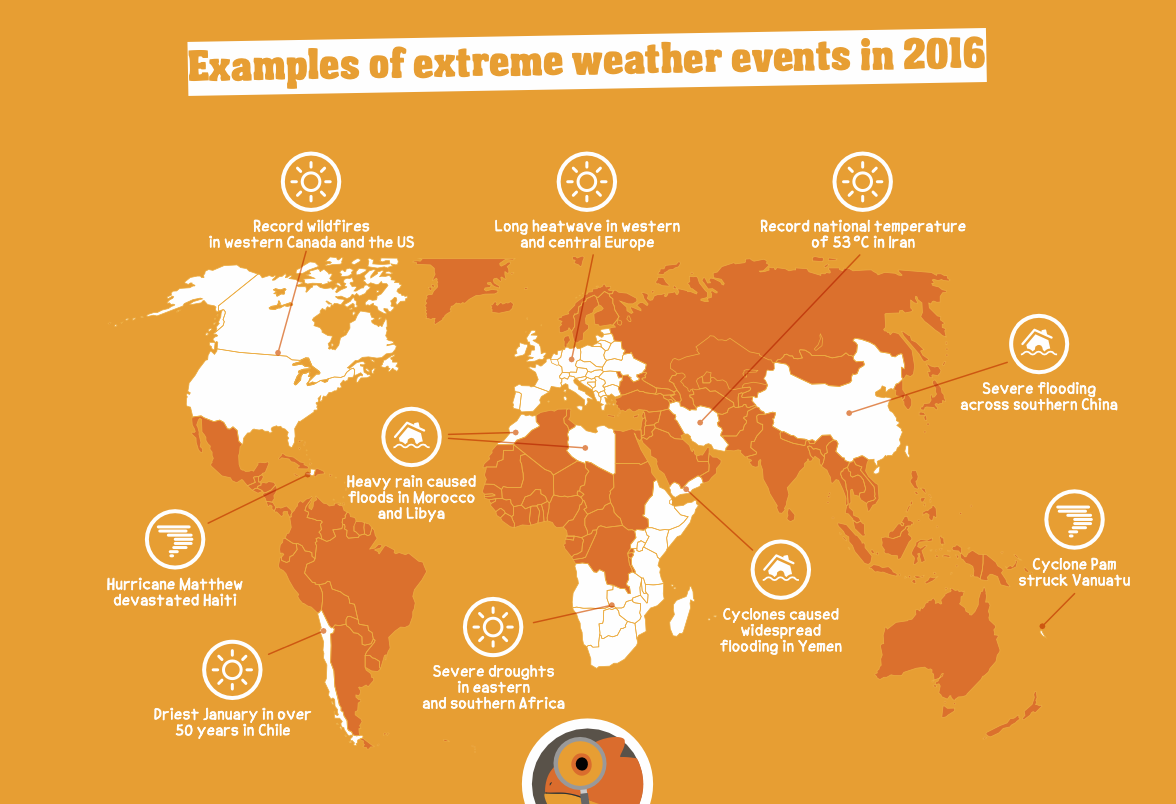

GPT-5.4 is the most capable model for analyzing charts, diagrams, documents, technical drawings, and complex visual scenes. Use it when you need more than a caption — when you need the model to reason about what it sees. Features a 1 million token context window.
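When you want the model to reason about a chart rather than just caption it, asking for JSON makes the answer easier to use downstream. Models sometimes wrap JSON in a Markdown code fence, so the sketch below strips an optional fence before parsing and checks for the keys your prompt requested. The key names `title` and `series` are hypothetical placeholders for whatever your prompt asks for.

```python
import json
import re

# An optional Markdown fence (three backticks, optionally tagged "json")
# that some models wrap around JSON output.
FENCE = re.compile(r"^`{3}(?:json)?\s*(.*?)\s*`{3}$", re.DOTALL)


def parse_chart_answer(
    raw: str, required: tuple[str, ...] = ("title", "series")
) -> dict:
    """Parse a JSON answer about a chart and check that expected keys exist."""
    text = raw.strip()
    match = FENCE.match(text)
    if match:
        text = match.group(1)  # keep only the content inside the fence
    data = json.loads(text)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = [key for key in required if key not in data]
    if missing:
        raise KeyError(f"answer is missing keys: {missing}")
    return data
```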

Budget pick: GPT-5 Nano

GPT-5 Nano is the cheapest official option that still handles images well. Good for bulk captioning where you need accurate descriptions at scale without high costs.

Open source: Moondream 2B

Moondream 2B is a lightweight vision model you can self-host. It's fast and cheap, and produces decent captions for most common images. A good choice if you need to run captioning on your own hardware.

What you can do

- Image captioning: Generate descriptions of what's in an image — useful for alt text, search indexing, and accessibility.

- Visual question answering: Ask specific questions about an image and get natural language answers.

- Training data: Generate captions for image datasets to prepare training data for fine-tuning.
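For training data, a common convention in several image fine-tuning tools is a caption file next to each image, sharing its file name but with a `.txt` extension (`cat.jpg` gets `cat.txt`). Check what your trainer expects before adopting it. The sketch assumes you have already collected captions in a dictionary keyed by image file name; the function name and suffix list are illustrative.

```python
from pathlib import Path

# Image types to caption; extend as needed.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".webp"}


def write_sidecar_captions(image_dir: Path, captions: dict[str, str]) -> list[Path]:
    """Write each caption to a .txt file with the same stem as its image.

    `captions` maps an image file name (for example "cat.jpg") to its caption.
    Images without a caption are skipped rather than given an empty file.
    """
    written = []
    for image in sorted(image_dir.iterdir()):
        if image.suffix.lower() not in IMAGE_SUFFIXES:
            continue  # ignore non-image files such as notes or existing .txt files
        caption = captions.get(image.name)
        if caption is None:
            continue
        target = image.with_suffix(".txt")
        target.write_text(caption.strip() + "\n", encoding="utf-8")
        written.append(target)
    return written
```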

Looking for interactive visual reasoning? Check out our vision models collection.

Featured models

- openai/gpt-5.4: OpenAI's most capable frontier model for complex professional work, coding, and multi-step reasoning.

- google/gemini-3-flash: Google's most intelligent model built for speed, with frontier intelligence, superior search, and grounding.

- openai/gpt-5: OpenAI's new model excelling at coding, writing, and reasoning.

- openai/gpt-5-nano: The fastest, most cost-effective GPT-5 model from OpenAI.

- anthropic/claude-4.5-sonnet: Claude Sonnet 4.5 is the best coding model to date, with significant improvements across the entire development lifecycle.

- lucataco/moondream2: moondream2 is a small vision language model designed to run efficiently on edge devices.


Frequently asked questions

Which model should I use for image captioning?

For most use cases, google/gemini-3-flash is the best default — it's fast, accurate, and handles a wide range of images. For the most detailed and nuanced descriptions, use anthropic/claude-4.5-sonnet. For the cheapest option that still works well, use openai/gpt-5-nano.
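The recommendations in this FAQ can be captured as a small lookup so scripts choose a model by need rather than hard-coding identifiers. The identifiers below are the ones this page uses; the need labels (`default`, `detailed`, and so on) are hypothetical names chosen for the sketch.

```python
# Model identifiers as given on this page; the mapping mirrors the FAQ advice.
RECOMMENDED = {
    "default": "google/gemini-3-flash",
    "detailed": "anthropic/claude-4.5-sonnet",
    "cheapest": "openai/gpt-5-nano",
    "complex": "openai/gpt-5.4",  # charts, diagrams, documents
    "self_hosted": "lucataco/moondream2",
}


def pick_model(need: str = "default") -> str:
    """Return the recommended model identifier for a captioning need."""
    try:
        return RECOMMENDED[need]
    except KeyError:
        raise ValueError(
            f"unknown need {need!r}; choose from {sorted(RECOMMENDED)}"
        ) from None
```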

Which models are the fastest?

google/gemini-3-flash and openai/gpt-5-nano are both built for speed. For a self-hostable option, lucataco/moondream2 is lightweight and quick.

Can I ask questions about an image?

Yes — all the recommended models support visual question answering. Upload an image and ask "What's in this photo?", "How many people are there?", or "Describe the lighting in this scene." openai/gpt-5 is particularly good at following specific instructions about what kind of answer you want.
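Asking several questions about the same image is a loop over prompts. In the sketch below, `run` is a hypothetical callable that sends a model identifier and an input dictionary to a model and returns its text answer; passing it in keeps the loop testable without network access. The `prompt` and `image` field names are assumptions, since input schemas differ between models.

```python
from typing import Callable

# A function that sends (model identifier, input dict) to a model and returns
# its text answer. Hypothetical: supply your own client wrapper.
Runner = Callable[[str, dict], str]


def ask_about_image(
    run: Runner, model: str, image_url: str, questions: list[str]
) -> dict[str, str]:
    """Ask each question about the same image and collect the answers."""
    answers = {}
    for question in questions:
        # "prompt" and "image" are assumed field names; schemas vary by model.
        payload = {"prompt": question, "image": image_url}
        answers[question] = run(model, payload).strip()
    return answers
```

In practice, `run` might wrap the Replicate Python client's `replicate.run(model, input=payload)`, joining the result with `"".join(...)` when a language model returns its answer as a sequence of text chunks; treat that wrapper as an assumption about the output type.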

What works best for complex images like charts, diagrams, or documents?

openai/gpt-5.4 is the most capable model for this — it can reason about charts, extract data from tables, interpret technical drawings, and analyze multi-page documents with its 1 million token context window.

Can I generate captions in bulk?

Yes — all these models work via API, so you can process images programmatically. For bulk captioning of training datasets, fofr/deprecated-batch-image-captioning processes ZIP archives using GPT, Claude, or Gemini.
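If you call the models directly rather than through a batch tool, a thread pool with a simple retry is often enough for bulk jobs. In this sketch, `caption` is a hypothetical callable that captions one image URL and returns the text; failed calls are retried with exponential backoff, and results come back in input order. Set `workers` to stay within whatever rate limits apply to your account.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

# Captions one image URL and returns the text. Hypothetical: wrap your own client.
Captioner = Callable[[str], str]


def caption_with_retry(
    caption: Captioner, image_url: str, attempts: int = 3, base_delay: float = 1.0
) -> str:
    """Call `caption`, retrying with exponential backoff on any exception."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts - 1):
        try:
            return caption(image_url)
        except Exception:
            # Wait base_delay, 2 * base_delay, 4 * base_delay, ... between tries.
            time.sleep(base_delay * 2**attempt)
    return caption(image_url)  # final attempt: let any error propagate


def caption_batch(
    caption: Captioner, image_urls: list[str], workers: int = 8, **retry_options
) -> dict[str, str]:
    """Caption many images concurrently.

    Results keep the input order; duplicate URLs collapse to one entry.
    """
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = pool.map(
            lambda url: caption_with_retry(caption, url, **retry_options), image_urls
        )
        return dict(zip(image_urls, results))
```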

Can I self-host a captioning model?

lucataco/moondream2 is a lightweight open-source vision model that you can run on your own hardware. It's not as capable as the official models, but it works well for basic captioning and tagging.

Can I use these models for commercial work?

Yes — most official models (GPT-5, Claude, Gemini) support commercial use. Check the license on each model page for specifics.